
A couple of weeks ago I was speaking with an international partner who asked why we would want to measure time spent learning. We talked a little about how the measure originated in the academic credit system, but they couldn’t see a current use for it among their clients.

Two weeks later, they came back with a use case and we began to set it up. It’s as though this measure of success has taken on a new life with eLearning.

The original concept came out of a need to standardize education, so that a student at one university could claim an equivalent amount of learning in a subject to a student at another university. In eLearning its use has expanded, and it is now more often tied to analytics that help you understand how effective your course material is.

Take video, for example: When you put video content into your eLearning course, how do you know if the learner watched the video? Whereas a follow-up learning assessment can tell you what the learner knows, it cannot verify that the learner watched the video.

Completion settings can also be helpful, but still do not provide a complete picture. If I embed a video into a Page activity in Moodle or Totara Learn, I can use the completion settings to track whether or not the learner clicked on the activity. I still cannot know if the learner watched the video.

With Time Spent Learning as a measure, though, you can gauge how long a learner has actually spent on that page and get a better sense of whether or not the entire video was viewed.

Here’s how it works:

With Lambda Analytics, there are two time trackers: One tracks how long a learner spends in a course, the other tracks how long a learner spends in an activity.

So, as a content creator, I might add three resources to a course section: a 5-minute introduction video, a 10-minute read, and a 15-minute content-rich video. I would therefore expect learners to spend at least 30 minutes in this course section.

With Time Spent Learning as a measure, I could then monitor whether or not users have spent the expected amount of time on a given activity.

If I find, for example, that learners are spending 2-5 minutes on a 15-minute video and then failing the assessment, then I could fairly hypothesize that the video activity is failing its purpose and I need to adjust how I offer content.

If, on the other hand, I find that learners are spending 1 hour on a 15-minute video, I may surmise that the content might need to be chopped up into smaller sections or be supplemented with interactivity to help the learner digest the material.
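The comparison described above can be sketched in a few lines of Python. This is only an illustration, not how Lambda Analytics works internally: the `Activity` class, the `classify_time_spent` function, and the 50% and 200% thresholds are all assumptions made up for the example, and you would tune the thresholds to your own content.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    """A course resource and the time we expect a learner to spend on it."""
    title: str
    expected_minutes: float


def classify_time_spent(expected_minutes, actual_minutes,
                        low_ratio=0.5, high_ratio=2.0):
    """Compare actual time with expected time.

    low_ratio and high_ratio are illustrative thresholds (assumptions),
    not values taken from any analytics product.
    """
    ratio = actual_minutes / expected_minutes
    if ratio < low_ratio:
        return "under"        # e.g. 2-5 minutes on a 15-minute video
    if ratio > high_ratio:
        return "over"         # e.g. 1 hour on a 15-minute video
    return "as expected"


# The course section from the example: three resources, 30 minutes in total.
section = [
    Activity("Introduction video (5 min)", 5),
    Activity("Reading (10 min)", 10),
    Activity("Content-rich video (15 min)", 15),
]
expected_total = sum(a.expected_minutes for a in section)

print(expected_total)                    # total expected minutes for the section
print(classify_time_spent(15, 3))        # learner spent 3 minutes on the 15-minute video
print(classify_time_spent(15, 60))       # learner spent an hour on the same video
```

An "under" result paired with a failed assessment points to content that is not being watched, while an "over" result suggests the content may need to be split up or supplemented.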

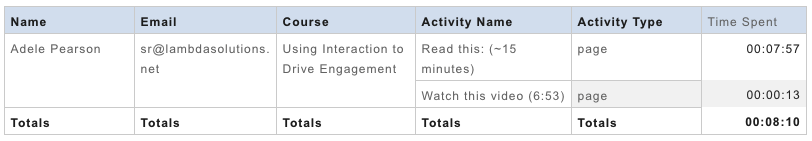

Here’s what a time-spent learning report could look like:

In the above example, I can see that this learner did not spend the expected time on the activities in this course. In looking at these results, I would then check for the following patterns:

- Have other learners spent the expected amount of time on these activities?

- Is there a common point in time at which learners click out of the activity?

- Have these learners passed/failed the learning assessments?
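If you export the report data, the three checks above could be roughed out as follows. This is a sketch under stated assumptions: the `summarize_activity` function, the shape of the records, and the sample numbers are all invented for illustration and do not come from a real report.

```python
from statistics import median


def summarize_activity(records, expected_minutes):
    """Summarize time spent and assessment results for one activity.

    records: list of (minutes_spent, passed) tuples, one per learner.
    This record shape is an assumption made for the example.
    """
    if not records:
        raise ValueError("records must contain at least one learner")

    met = [r for r in records if r[0] >= expected_minutes]
    short = [r for r in records if r[0] < expected_minutes]

    def pass_rate(group):
        # Share of learners in the group who passed; None if the group is empty.
        if not group:
            return None
        return sum(1 for _, passed in group if passed) / len(group)

    return {
        # Have other learners spent the expected amount of time?
        "share_meeting_expected": len(met) / len(records),
        # Is there a common amount of time before learners click out?
        "median_minutes": median(minutes for minutes, _ in records),
        # Have these learners passed or failed the assessment?
        "pass_rate_met": pass_rate(met),
        "pass_rate_short": pass_rate(short),
    }


# Made-up sample data for a 15-minute video: (minutes spent, passed assessment)
sample = [(3, False), (4, False), (16, True), (15, True), (2, False)]
summary = summarize_activity(sample, expected_minutes=15)
print(summary)
```

In this invented sample, most learners leave after a few minutes and those learners fail the assessment, which is the pattern that would prompt a closer look at the video itself.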

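After a bit more analysis along these lines, I would have a clearer idea of how to enhance the learning experience, whether that means breaking the content into smaller sections or adding interactivity.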

For more on using video in eLearning, check out our upcoming Master Class and Lambda Lab.

Extra tip: It’s considered best practice to add the time to the title of a reading, listening or video resource. This helps the learner know what is expected of them, and it will also help you measure whether or not they actually spent the expected time on the activity.

Contact our knowledge staff to begin your eLearning success journey.