If you’ve been nodding along in data analysis meetings while secretly Googling ‘What is learning analytics?’ under the desk, this article’s for you.

(Source: Undraw)

1. What is learning analytics?

Defining analytics

There are a whole bunch of ways to define analytics. Here’s a simple, general definition:

Learning analytics is about collecting traces that learners leave behind and using those traces to improve learning.

This is a nice definition of analytics for education, partly because it uses plain English, but also because it alludes to the cyclical nature of the analytics process.

By running pattern-producing algorithms on past work, it’s possible to highlight pressure points, spikes in performance, and areas of unusual change in your eLearning course. This supports better targeting of resources so that designers, admins, or instructors can make focused refinements to learning content—before the data-gathering stage begins again.

It makes sense to start at the beginning. So if you’re new to analytics and want to dive right in, this free eBook is for you - LMS 101: Learning Analytics.

Reporting

When organizations talk about data analytics, they’re usually talking about reporting. Reporting tools, such as Lambda Analytics, are the platform through which most people access analytics on the daily.

Reports are visual or statistical descriptions of some aspect of your analytics data set. They can be created for nearly any purpose—most often to demonstrate an insight that a data analyst has found while experimenting with your data set, or to update people on useful learning metrics.

For example, you might receive a bi-weekly analytics report on the most and least popular content, highest and lowest attainment in quizzes, time spent learning, or which courses are being fully completed.

This makes analytics reports a great way to shed light on exactly what learners are doing, giving educators, administrators, HR, and wider stakeholders insights into the reality of learning inside your institution.
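As a rough illustration of what sits behind a report like this, here is a minimal Python sketch that turns a handful of activity records into per-course figures. The records, field layout, and the `course_report` function are all made up for the example; a real reporting tool would pull this data from your LMS.

```python
from collections import defaultdict

# Hypothetical activity log: (learner, course, minutes_spent, completed)
records = [
    ("ana", "Safety 101", 40, True),
    ("ben", "Safety 101", 25, False),
    ("cho", "Safety 101", 55, True),
    ("ana", "Data Basics", 30, True),
    ("ben", "Data Basics", 10, False),
]

def course_report(rows):
    """Summarise enrolments, completion rate and average time per course."""
    grouped = defaultdict(list)
    for _learner, course, minutes, completed in rows:
        grouped[course].append((minutes, completed))

    report = {}
    for course, entries in grouped.items():
        enrolled = len(entries)
        done = sum(1 for _, completed in entries if completed)
        report[course] = {
            "enrolled": enrolled,
            "completion_rate": done / enrolled,
            "avg_minutes": sum(m for m, _ in entries) / enrolled,
        }
    return report

report = course_report(records)

# List the most popular courses first.
for course, stats in sorted(report.items(), key=lambda kv: -kv[1]["enrolled"]):
    print(f"{course}: {stats['enrolled']} enrolled, "
          f"{stats['completion_rate']:.0%} completed, "
          f"{stats['avg_minutes']:.1f} min on average")
```

The same grouping idea extends to quiz attainment or any other metric you want in the report: add the field to each record and one more line to the summary.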

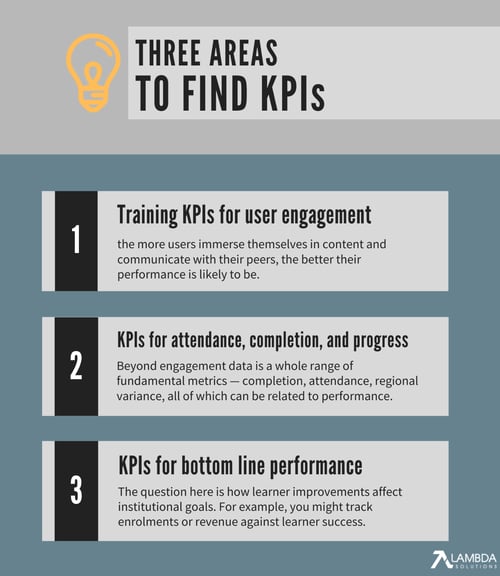

Key Performance Indicators (KPIs)

To translate data from its raw state into a persuasive story about how learning is (and should be) happening on a course, data analysts need to develop a set of metrics by which to measure performance.

These measurements are known as Key Performance Indicators (KPIs), and they can be applied to reports and other analytics outputs. The exact KPIs you need will depend on the overall objectives of your organization, as well as the data question that’s being asked.

Typical examples of KPIs include:

- Pass/fail rates

- Applications and acceptance rates

- Student satisfaction percentage

- Student/faculty ratio

- Departments within budget

- Freshman retention

For instance, you might be interested in whether analytics data supports recent changes you’ve made to your enrollment process. To answer this data query, you can use your analytics platform’s filters to develop a KPI, then run a series of reports that demonstrate progress in that area.
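To make the enrollment example concrete, here is a small Python sketch of one possible KPI: the share of started enrollments that are completed, compared before and after a process change. The figures, the `new_process` flag, and the `completion_rate` function are hypothetical, and your analytics platform’s filters would normally do this slicing for you.

```python
# Hypothetical per-term enrollment figures.
# "new_process" marks terms that ran after the enrollment change.
terms = [
    {"term": "2022-Fall", "started": 400, "completed": 240, "new_process": False},
    {"term": "2023-Spring", "started": 380, "completed": 247, "new_process": False},
    {"term": "2023-Fall", "started": 450, "completed": 342, "new_process": True},
    {"term": "2024-Spring", "started": 500, "completed": 395, "new_process": True},
]

def completion_rate(rows):
    """KPI: completed enrollments as a share of all started enrollments."""
    started = sum(r["started"] for r in rows)
    completed = sum(r["completed"] for r in rows)
    return completed / started if started else 0.0

before = completion_rate([r for r in terms if not r["new_process"]])
after = completion_rate([r for r in terms if r["new_process"]])

print(f"Before the change: {before:.1%}")
print(f"After the change:  {after:.1%}")
print(f"Difference:        {after - before:+.1%}")
```

A shift in the KPI shows that something changed, not why. It is worth checking whether anything else changed over the same period before crediting the new process.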

A good way to keep analytics reporting simple is to develop reports based on a single set of KPIs. For other tips on efficient reporting, watch our definitive webinar: Top 5 Tips for Keeping Reporting Simple.

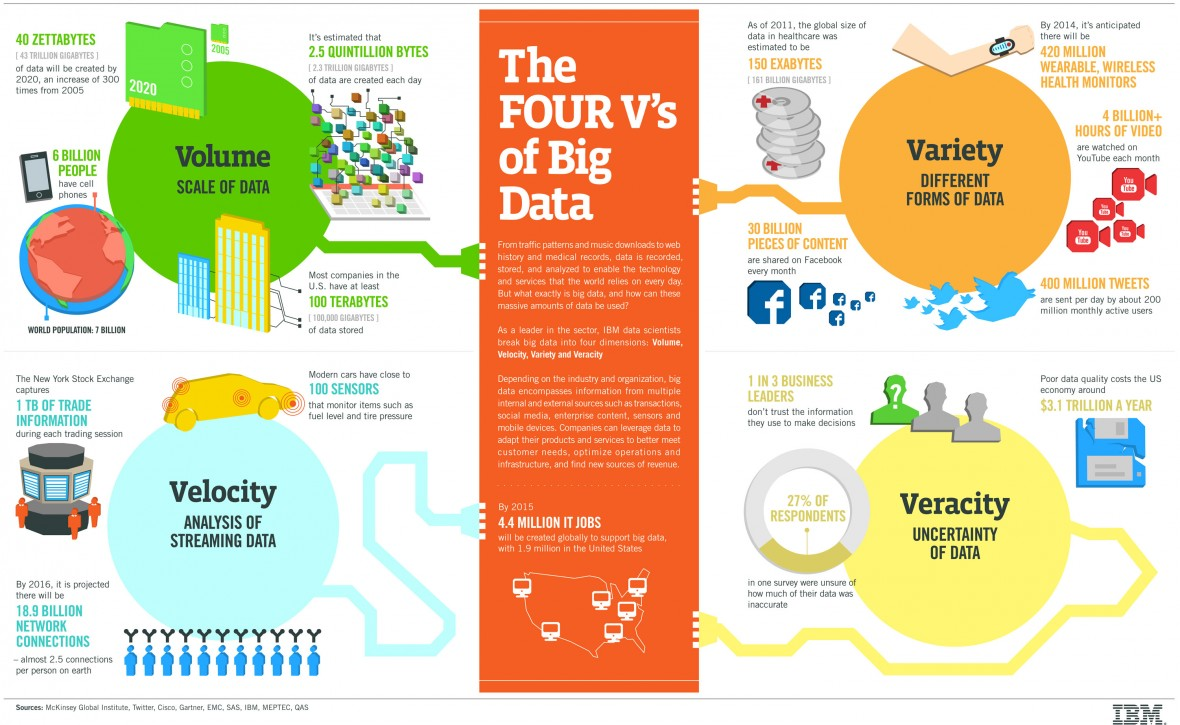

2. Why do people keep talking about ‘Big Data’?

Big Data often conjures the idea of thousands of data points forming pleasing-looking patterns, such as this map of 2015 Thanksgiving flights by Google (Source: GoogleTrends).

What’s so ‘big’ about it?

You probably won’t get far in a conversation about analytics in education before somebody brings up the concept of Big Data.

The idea of Big Data derives from the long digital trails we leave behind us as we go about our daily online business. Almost every interaction a user has with a learning management system (or other educational space) leaves some kind of footprint—think logging on and off, browsing activities, completing quizzes—which, across a whole student body, aggregates to a lot of digital traces being left behind.

The term is often applied to any large data set, but a common rule of thumb is that a set should be at least one terabyte (TB) to count as truly ‘big’. Producing enough data clearly isn’t a problem: one widely quoted estimate puts the world’s daily output at around 2.5 quintillion bytes, and a separate 2020 estimate suggests up to 1.7MB of data created per second for every person on earth.
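It helps to put the two headline figures on the same scale. The Python sketch below is a back-of-envelope calculation only, and it assumes a 2020 world population of roughly 7.8 billion, which is not a figure from this article. On that assumption the two numbers come out several hundred times apart, so they are best read as separate estimates rather than two views of the same measurement.

```python
# Figures quoted in the text.
BYTES_PER_DAY = 2.5e18            # "2.5 quintillion bytes" per day
MB_PER_PERSON_PER_SECOND = 1.7    # "1.7MB ... per second for every person"

# Assumption for illustration: approximate 2020 world population.
WORLD_POPULATION = 7.8e9
SECONDS_PER_DAY = 24 * 60 * 60

# Express the daily total as a per-person, per-second rate.
bytes_per_person_per_second = BYTES_PER_DAY / (WORLD_POPULATION * SECONDS_PER_DAY)

# Express the per-person rate as a daily worldwide total.
implied_bytes_per_day = (
    MB_PER_PERSON_PER_SECOND * 1e6 * WORLD_POPULATION * SECONDS_PER_DAY
)

ratio = implied_bytes_per_day / BYTES_PER_DAY
terabytes_per_day = BYTES_PER_DAY / 1e12

print(f"2.5 quintillion bytes/day is about "
      f"{bytes_per_person_per_second / 1e3:.1f} KB per person per second")
print(f"1.7 MB per person per second is about "
      f"{implied_bytes_per_day / 1e18:.0f} quintillion bytes per day")
print(f"The second figure is roughly {ratio:.0f} times the first")
print(f"Either way, the one-terabyte mark is passed "
      f"{terabytes_per_day:,.0f} times over each day on the lower figure")
```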

Today, many educational organizations can use analytics to predict performance, as well as learn from past work (tbmcg.com).

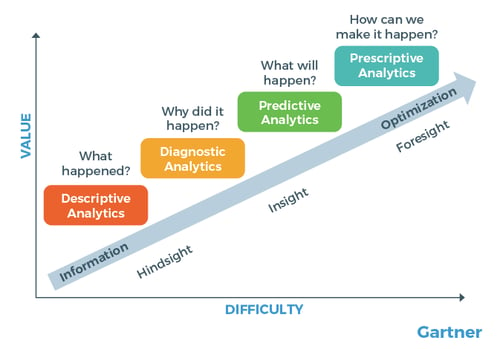

Predictive analytics

Predictive analytics takes the power of Big Data and uses it to focus on the future. This is a change for many people in the training and education industry, who will mostly encounter retrospective analytics—looking at what’s already happened.

The information held within Big Data means that today, analytics speaks not only to past performance but can also give insights into future behaviour.

Learning analytics is now commonly divided into four phases:

- Descriptive analytics—using data analysis to tell a story of past performance.

- Diagnostic analytics—using data to answer questions about why things happened as they did.

- Predictive analytics—using Big Data to follow trends, and suggest what is likely to happen next.

- Prescriptive analytics—using Big Data and complex simulations to answer questions about what the next steps taken should be.
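The four phases can be sketched on a toy data set. The Python below is a deliberately tiny illustration with invented weekly figures: an average stands in for descriptive analytics, a correlation for diagnostic, a straight-line trend for predictive, and a simple target rule for prescriptive. Real Big Data pipelines use far richer models, and the `TARGET` value and suggested action are assumptions made up for the example.

```python
from statistics import mean

# Hypothetical weekly course data.
weeks = [1, 2, 3, 4, 5, 6]
avg_score = [62.0, 64.0, 67.0, 69.0, 72.0, 74.0]   # mean quiz score per week
study_hours = [2.0, 2.5, 3.0, 3.5, 4.0, 4.5]       # mean study hours per week

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def linear_fit(xs, ys):
    """Least-squares straight line through the points: returns (slope, intercept)."""
    mx, my = mean(xs), mean(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return slope, my - slope * mx

# Descriptive: what happened?
overall = mean(avg_score)

# Diagnostic: what moved alongside the scores? (correlation, not proof of cause)
r = pearson(study_hours, avg_score)

# Predictive: where is the trend heading?
slope, intercept = linear_fit(weeks, avg_score)
forecast_week_8 = slope * 8 + intercept

# Prescriptive: what should we do about it? (hypothetical target and action)
TARGET = 80.0
action = "keep current plan" if forecast_week_8 >= TARGET else "add revision sessions"

print(f"Average score so far: {overall:.1f}")
print(f"Correlation with study hours: {r:.2f}")
print(f"Forecast for week 8: {forecast_week_8:.1f}")
print(f"Suggested action: {action}")
```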

With the right resources and enough time to explore such large data sets, predictive analytics can yield impressive results. For example, IBM has said that its AI can predict with 95 percent accuracy which employees are likely to quit their jobs.

IBM’s description of the volume, variety, velocity and veracity of information offered by Big Data (Infographic: The Four V's of Big Data).

3. Analytics in education and eLearning

Why should I care about data analysis in education?

OK, so it’s probably true to say that your organization doesn’t have IBM levels of analytics resources. But that doesn’t mean that education institutions are insulated from the growing integration of data into everyday life.

Tomorrow’s learners (and many of today’s) expect a personal learning environment—where lesson content, and the delivery of that content, is tailored specifically to their needs and preferences, based on their learner data footprint.

Likewise, the next generation of training professionals is far more likely to make use of tools allowing them to identify trends in their classes—taking an analytical approach to grades, learning objectives, and learner engagement.

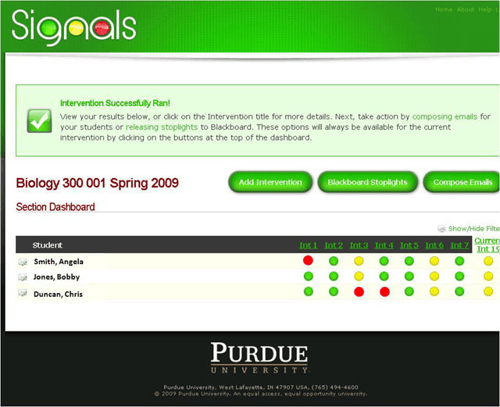

The truth is that analytics in education has been around for a fair amount of time already. In the mid-2000s, Purdue University introduced an analytics-driven traffic light system, which gave educators an instant visual snapshot of student progress.

More fundamentally, basic analytics has been used for decades at the exam level, where SAT and other test results are analyzed, not by looking qualitatively at the entire learning process, but through overall trends and summative snapshots of what has been learned.

Purdue’s early forays into analytics in education (Source: Educause Review)

Gap analyses

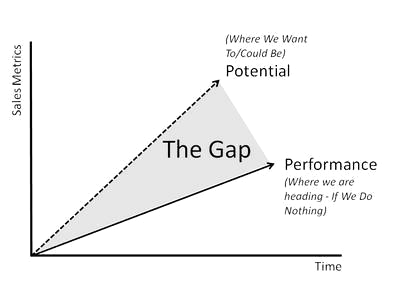

Perhaps the simplest way that analytics and education come together is through closing skills gaps.

You may be familiar with gap analyses as a tool for reviewing the difference between performance and potential—either for an individual or an organization.

It’s an effective way of asking questions about productivity and comprehension: how well do your learners understand their objectives, and how often do they actually meet them?

This kind of task is a perfect use of learner analytics. Identifying a skill gap is all about measuring an entity's ability to meet objectives, which—as we’ve seen above—is pretty much synonymous with developing a set of KPIs and running reports to find out how closely those objectives are being met.
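In code, a gap analysis can be as simple as subtracting actual performance from the target for each skill. The Python sketch below assumes hypothetical competency names and scores on a 0 to 100 scale; the `skill_gaps` function is illustrative, not part of any particular analytics product.

```python
# Hypothetical competency targets and measured scores (0-100 scale).
targets = {"data entry": 80, "customer service": 85, "safety": 90}
actuals = {"data entry": 84, "customer service": 70, "safety": 78}

def skill_gaps(targets, actuals):
    """Return (skill, gap) pairs where performance falls short, largest gap first."""
    gaps = {
        # A skill with no recorded score is treated as 0 for this sketch.
        skill: target - actuals.get(skill, 0)
        for skill, target in targets.items()
    }
    shortfalls = [(skill, gap) for skill, gap in gaps.items() if gap > 0]
    return sorted(shortfalls, key=lambda pair: pair[1], reverse=True)

gaps = skill_gaps(targets, actuals)
for skill, gap in gaps:
    print(f"{skill}: {gap} points below target")
```

Sorting by the size of the gap gives you a ready-made priority list for where training resources should go first.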

The gap between potential and actual market standing (marketing91.com).

MUST-READ!—What does analytics mean for my eLearning business?

This is where the rubber really hits the road with learner analytics. If your organization invests at all in eLearning—or if you run eLearning as a business—analytics will help you develop your return on investment (ROI).

How? Well, let’s look at the wider picture of eLearning in education and training over the past decades.

Interestingly, the rise in analytics-based course design has coincided with a fall in the amount of resources organizations are spending on training their employees.

From 1979 to 1995, for example, the average amount of time a worker spent in formal training fell from 2.5 weeks to 11 hours. Today, just under four out of five employees are not receiving on-the-job training at all.

At the same time, higher education institutions are hiring more IT and tech staff than ever before, with analytics-related roles such as programmers and system analysts accounting for nearly a quarter of new IT positions in 2018.

Across industries, the evidence indicates that most organizations are recognizing they no longer need to spend heavily on extensive training budgets, and are instead investing in specific, technical areas.

Why? Because using learner analytics to build and refine education programs gives designers clear insights into the most effective content (what learners want and which teaching approaches they respond best to) as well as which parts of the program are not performing and can therefore be cut.

Ready to make the same gains in your own organization? Start by watching our webinar on How To Utilize Data To Improve Operational and Learning Effectiveness.

The result is a more optimized, ROI-boosting eLearning course, which produces the same or better learning outcomes for less. Scroll down for three examples of how eLearning professionals are using data analysis in today’s educational environments.

4. Education analytics: three example uses

(Source: Charles Deluvio, Unsplash).

Education analytics example #1: Analytics reporting on individual learner progress

Simple data tracking and reporting can greatly increase the value of a student or employee review. When you know exactly where a learner is excelling, and where they need more help, it becomes possible to skip straight to the actionable part of the meeting.

Here, it’s all about knowing the most beneficial metrics for eLearning and why you should track them. If you’re looking at analytics across a classroom, you might try to identify at-risk learners—allowing you to intervene at an earlier stage and close widening attainment gaps within a cohort.
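One simple way to spot at-risk learners is to check each learner against a few thresholds. The Python sketch below is a rule-based illustration only: the `Learner` fields, the three thresholds, and the sample cohort are all invented, and sensible cut-offs will differ from course to course.

```python
from dataclasses import dataclass

@dataclass
class Learner:
    name: str
    avg_quiz_score: float     # 0-100
    days_since_login: int
    modules_completed: int
    modules_assigned: int

# Illustrative thresholds only; tune these to your own course.
MIN_SCORE = 60.0
MAX_INACTIVE_DAYS = 14
MIN_PROGRESS = 0.5

def risk_flags(learner):
    """Return the list of reasons a learner might need early support."""
    flags = []
    if learner.avg_quiz_score < MIN_SCORE:
        flags.append("low quiz scores")
    if learner.days_since_login > MAX_INACTIVE_DAYS:
        flags.append("inactive")
    # Only check progress when something has actually been assigned.
    if learner.modules_assigned > 0:
        progress = learner.modules_completed / learner.modules_assigned
        if progress < MIN_PROGRESS:
            flags.append("behind on modules")
    return flags

cohort = [
    Learner("ana", 82.0, 2, 8, 10),
    Learner("ben", 55.0, 20, 3, 10),
    Learner("cho", 71.0, 16, 6, 10),
]

at_risk = {}
for learner in cohort:
    flags = risk_flags(learner)
    if flags:
        at_risk[learner.name] = flags

for name, flags in at_risk.items():
    print(f"{name}: {', '.join(flags)}")
```

Returning the reasons rather than a bare yes or no keeps the conversation with the learner focused on what to fix.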

By aggregating reports, it’s also possible to build a pretty undeniable evidential case, which is valuable for use in the event of a more serious performance review.

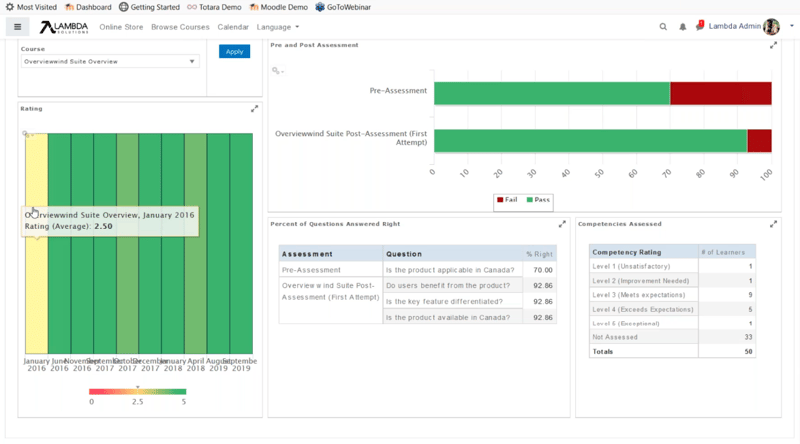

An example of a Lambda Analytics learner effectiveness dashboard. For instructors, dashboards can include info on course feedback, assessments, and competencies.

Education analytics example #2: Learning analytics for instructors

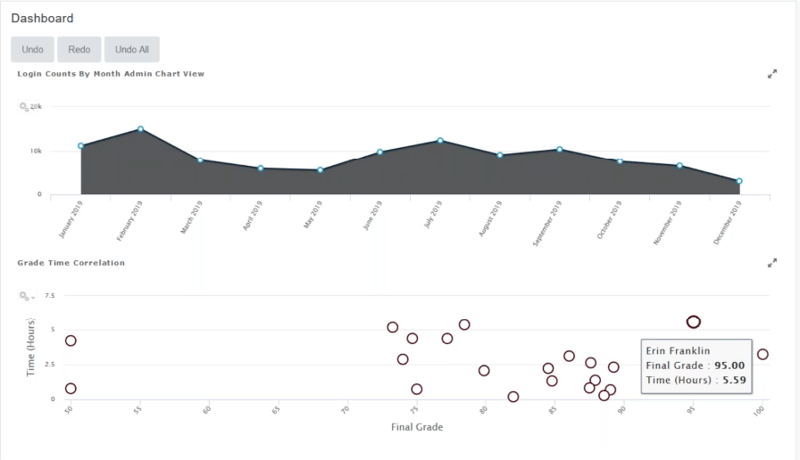

Nowadays, it’s common practice for instructors to run their own analytics reports, using their findings to improve their practice. Many institutions also support teachers with regular analytics updates, which provide a helpful diagnostic tool for identifying issues and informing future design.

When developing this type of data report, Lambda Analytics dashboards are probably the most engaging option. Each dashboard can speak to a different aspect of your program—filled with the most relevant data for a particular role, then shared wherever is easily accessible for instructors.

For example, a standard learner effectiveness dashboard (like the one pictured above) might include insights on course feedback, assessments, and competencies, tracked month by month across a course. This information can then be embedded and updated periodically.

For this dashboard, a heatmap represents feedback over time, while pre- and post-course statistics are shown in a horizontal bar chart. But dashboards can combine any number of chart types and datasets—student subgroup comparisons, for example, such as BME or part-time students—whatever you need to help demonstrate how instructors’ approaches are playing out over time.
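Under the hood, a feedback heatmap is just a grid of averages. As a rough Python sketch, assuming feedback arrives as hypothetical (month, survey item, rating) records, the cell values could be prepared like this before any charting tool colours them in:

```python
from collections import defaultdict
from statistics import mean

# Hypothetical feedback responses: (month, survey item, rating from 1 to 5)
responses = [
    ("2024-01", "clarity", 4), ("2024-01", "clarity", 3), ("2024-01", "pace", 2),
    ("2024-02", "clarity", 5), ("2024-02", "pace", 3), ("2024-02", "pace", 4),
]

def feedback_grid(rows):
    """Average rating per survey item and month: the cells a heatmap would colour."""
    cells = defaultdict(list)
    for month, item, rating in rows:
        cells[(item, month)].append(rating)

    months = sorted({month for month, _, _ in rows})
    items = sorted({item for _, item, _ in rows})
    grid = {
        # None marks a month with no responses for that item.
        item: [mean(cells[(item, m)]) if cells[(item, m)] else None for m in months]
        for item in items
    }
    return months, grid

months, grid = feedback_grid(responses)
print("item".ljust(10), *months)
for item, row in grid.items():
    print(item.ljust(10), *row)
```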

If you’re an instructor wondering which KPIs matter most, you’ll probably want to check out our blog post, What Are The Most Important KPIs for LMS eLearning?

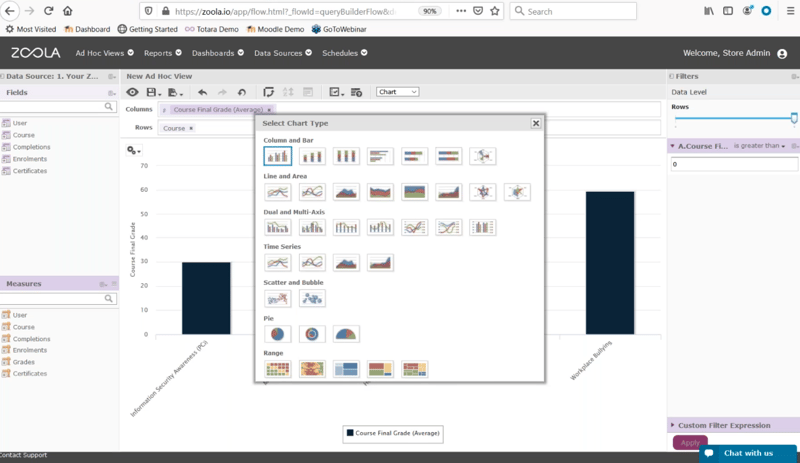

Use Lambda Analytics to select the chart type and format that will tell a persuasive data analytics story.

Education analytics example #3: Learning analytics for eLearning stakeholders

By now, it should hopefully be clear that analytics tools such as Lambda Analytics Dashboards have potential beyond a single KPI or learner objective.

The storytelling power of data analytics makes it one of the most effective ways of selling your education program to wider stakeholders. Isolated performance indicators—a single survey of student satisfaction, for example—can be difficult for people to meaningfully access, especially if they’re external to your institution. A full analytics report, on the other hand, lets you paint a vivid picture of learning by selecting any number of data points for presentation.

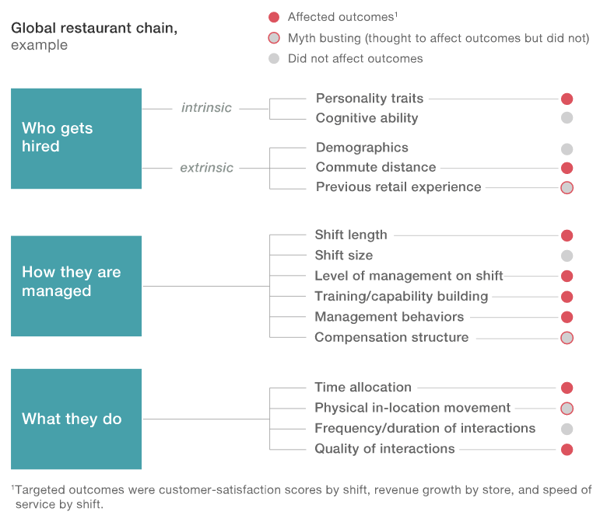

Analytics firm McKinsey&Company used people analytics to match employee features with desired retention outcomes (McKinsey&Company case study 2017, Using people analytics to drive business performance: A case study).

For management and eLearning stakeholders higher up the chain, predictive analytics can also be used to highlight faculty members and employees who may be at a higher risk of leaving their role.

Algorithms can compare known exit factors with data gathered through reviews, general performance, and qualitative surveys. The results then help highlight where attention and resources should be allocated in the hope of retaining the top teaching talent!
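Here is a heavily simplified Python sketch of that comparison. The exit factors, their weights, and the staff records are entirely hypothetical and are not drawn from the McKinsey or IBM work mentioned in this article. In practice the weights would be estimated from historical data on who actually left, and any such scoring should be handled with care and transparency.

```python
# Hypothetical exit factors and weights, for illustration only.
# A real model would learn these from historical leaver data.
EXIT_FACTOR_WEIGHTS = {
    "low_survey_satisfaction": 0.4,
    "no_promotion_3_years": 0.3,
    "declining_review_scores": 0.2,
    "high_workload": 0.1,
}

def attrition_risk(factors_present):
    """Sum the weights of the exit factors observed for one person (0.0 to 1.0)."""
    score = sum(
        weight
        for factor, weight in EXIT_FACTOR_WEIGHTS.items()
        if factor in factors_present
    )
    return round(score, 2)

# Hypothetical staff records built from reviews, performance data and surveys.
staff = {
    "instructor_a": {"low_survey_satisfaction", "high_workload"},
    "instructor_b": {"declining_review_scores"},
    "instructor_c": {
        "low_survey_satisfaction",
        "no_promotion_3_years",
        "declining_review_scores",
    },
}

# Highest risk first, so attention goes where it is most needed.
ranked = sorted(
    ((attrition_risk(factors), name) for name, factors in staff.items()),
    reverse=True,
)
for score, name in ranked:
    print(f"{name}: risk score {score:.2f}")
```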

Keen to keep climbing down the Learning Analytics rabbit hole? Here’s what we’ve got for you:

- eBook: LMS 101: Learning Analytics

- eBook: The Practical Guide to Evaluating Your Workplace Learning Effectiveness

- Webinar: How To Utilize Data To Improve Operational and Learning Effectiveness

- Article: What Are The Most Important KPIs for LMS eLearning?

- Article: The most beneficial metrics for eLearning and why you should track them

- Article: Healthcare Analytics: Why Educators Are Using Blended Learning and Big Data in Healthcare

- Article: Learning Analytics: The Art and Science of Using Data in eLearning